
1 Dwalin: So whut's a djinn, then? {translation: So what, pray tell, is a djinn?}

2 Alvissa: A djinni. The singular is djinni, the plural is djinn.

3 Alvissa: Legend says they are powerful magical beings made of smokeless flame.

4 Kyros: Made of fire? Well that's just showing off.

This strip's permanent URL: http://www.irregularwebcomic.net/3431.html

I remember the time I first made the connection: djinn is the plural of djinni - which sounds similar to... genie. It's actually the same word, just transcribed from Arabic a bit differently.

Djinn are mentioned in the Quran, but it's a bit difficult to understand exactly how they are defined without understanding Arabic. Different English translations refer to the djinn as being created by Allah from "smokeless fire" or "scorching fire" or "essential fire" or "the fire of a scorching wind" (Quran 15:27).

Either way, they're probably beings that Kyros really wants to mess with some day.

Okay, I was having trouble making a connection to the comic. But I want to talk about programming. This is something that I do in my job, and to set up and run things like this web site. But I'm not exactly a "computer programmer" in the fully professional sense of someone who writes computer code for a living - for me it's really just an incidental tool that I use to achieve the things that my job and hobbies are really about.

And this is kind of the state of programming today. It has become a tool that many people use to achieve other tasks, similar to using Microsoft Office to write documents, or Adobe Photoshop to edit images, except to do more customised tasks that programs like those can't do. In a sense, programming has always been a tool, not an end in itself, but you can draw a distinction between people writing programs to achieve a specific outcome, versus people writing programs to produce a piece of software that can then be used by other people or systems as a tool itself.

My first exposure to programming was in primary school. At that time, personal computers were a very new thing. I'd heard about computers and the cool things they could do, but the first time I ever saw one was on a visit to the old Museum of Applied Arts and Sciences in Sydney (which later transformed into the Powerhouse Museum). They had a display in which you could play a game of noughts and crosses against a computer.

The interface was a giant grid with light bulbs behind each of the nine squares that lit them up in the shapes of circles or crosses, and a hole with a button in each square that you would press to make your move when it was your turn. Behind this was a huge box, which was where the computer did its thinking. Despite the simplicity of the game, this was a fascinating thing for a kid interested in technology, and I spent quite some time playing game after game with it, determined to beat the thing. (You can see the actual machine and its description in this museum archive page.)

It turned out that the machine had not been programmed optimally, because I discovered that it was possible to beat it if you played a specific sequence of moves. For some reason the programmer had included a fixed response pattern to this sequence of moves, which would let the human player win (probably so that museum visitors would at least have some chance against the thing).

Anyway, after seeing this amazing demonstration of what a computer could do, I sought books in my school library. I found a book titled something like "An Introduction to BASIC Programming". I borrowed and read it, but it took some serious head-scratching to figure out what it meant. Not actually having access to a computer to try any of the programs or commands listed in the book, I had to make some wild assumptions about how a computer worked before I could even understand that you could instruct the computer by typing a bunch of numbers and words into it. For me, a computer was still just a mysterious box of wires - I had no idea whatsoever how one worked.

My first "computer" programming experience, then, was writing a very simple program in BASIC, on paper, with a pen. And then "running" it by pretending that I was the computer: reading one line of my code at a time, and (sometimes referring back to the library book) working out what I had to do based on that. I stepped through the code, following each instruction, adding some numbers and "printing" some output by writing it down with a pen. It seemed to work, but I remember being unsure if I was doing something wrong or how this actually translated into using a real computer.

A year or two later, one of my friends (whose parents had a lot more money than mine) got an Apple II computer. I used any and every chance to go over to his place and play games on it. And occasionally we wrote little programs, usually just to do really simple stuff like print the same text to the screen over and over until interrupted. It's amazing how amusing this is to adolescent boys not yet used to pervasive computer technology.

My next real experience with programming didn't come until I started university. We couldn't afford a personal computer when I was in high school, so I didn't really do much during those years, except for occasionally setting up an endless "David was here." print loop on a display machine in an electronics shop, while trying to avoid being nabbed by the sales assistant.

Part of Charles Babbage's Difference Engine, forerunner of modern computers. Whipple Museum of the History of Science, Cambridge University.

In my first year at university I learnt to program in Pascal and FORTRAN. Moving into the physical sciences, I only ever did the one year of computer science, so missed out on learning C and Perl and stuff like that in later years. Fortunately (for some definition of that word), the programming I needed to do later in my degree turned out to be FORTRAN, since that's what astronomers were using at the time to do their data analysis. But at the urging of a friend, I taught myself C, on the basis that he claimed it was a much better language. I thought so too, and - to the incredulous disapproval of my Ph.D. supervisor - I began writing all my data analysis code in C instead of FORTRAN.

I also started picking up bits of knowledge about tools like sed and then Perl, which made processing files of text and numerical data easier. These tools make use of regular expressions, which are a sort of sub-language for interpreting strings of text in a very general way. Regular expressions make use of keyboard symbols to stand for various text characters or combinations of characters. This allows you to, for example, write a concise piece of code to tell whether an arbitrary piece of text is a correctly formatted date, in whatever date format is desired. Or a street address, or a price, or whatever. This is very useful when handling many types of data. In my case, I'd be reading numerical data and checking formats and matching them to text labels, and so on, in order to perform statistical analyses or make graphs.
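To make the date example concrete, here is a minimal sketch in Python (rather than the sed or Perl mentioned above). The YYYY-MM-DD format, the pattern, and the function name `looks_like_date` are all assumptions chosen for illustration, not anything taken from the original analysis code.

```python
import re

# Hypothetical pattern: four digits, a hyphen, two digits, a hyphen, two digits.
# The YYYY-MM-DD format is an assumption made for this example.
DATE_PATTERN = re.compile(r"(\d{4})-(\d{2})-(\d{2})")


def looks_like_date(text: str) -> bool:
    """Return True if text has the shape YYYY-MM-DD with a plausible month and day."""
    match = DATE_PATTERN.fullmatch(text)
    if match is None:
        return False
    month, day = int(match.group(2)), int(match.group(3))
    # The regular expression checks the shape; the range check is ordinary code.
    return 1 <= month <= 12 and 1 <= day <= 31
```

The pattern only checks the layout of the characters, so something like the 31st of February would still pass. Ruling that out needs real calendar logic, not a longer regular expression.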

Perl is also used to run this very website. I wrote much of the original code for this site using Perl, which performs matching on the HTML code of the web pages to extract things like comic strip numbers, transcripts, annotations, and so on, and reformat them to produce the archive page, the RSS news feeds, and all the other stuff that happens automatically during an update. Originally it did all the work on flat text files. Over the years I've replaced much of the Perl with PHP scripts and an SQL database, but there are still snippets of Perl floating around doing some of the original jobs.

Programming has evolved a long way since the days of BASIC and FORTRAN. But even these old dinosaurs were considerable advances over what came before. When computers were really new, they were programmed by changing hardware connections with wires. The first software programmable machines had to be programmed with limited instruction sets that referred to low-level concepts such as the specific memory registers, and things like incrementing specific counters and so on. These instructions were specified using machine code, essentially a mapping from specific binary sequences to the low-level instructions that controlled the flow of data through the machine.

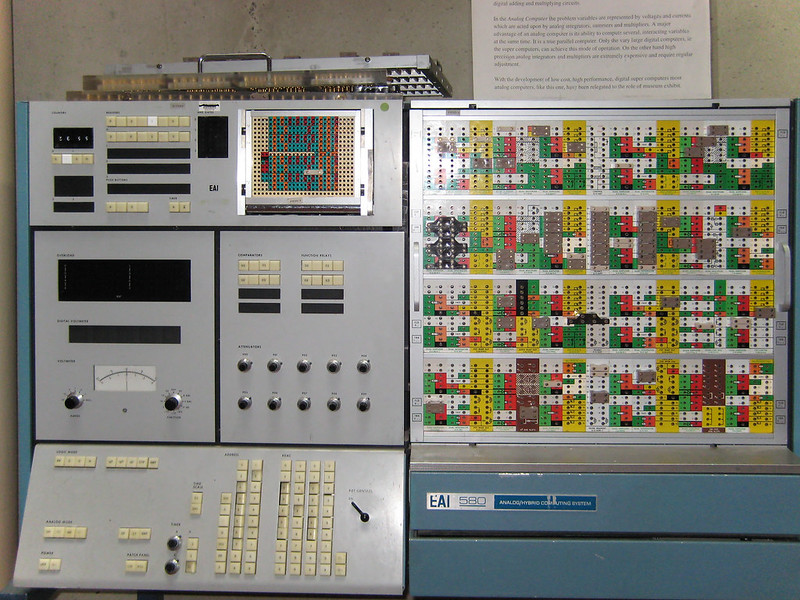

An old analogue computer. Museum display at Australian National University.

Since this was difficult for human programmers to work with, machine code evolved to produce assembly language, in which the machine instructions are referenced by mnemonic text abbreviations that humans find easier to remember. Assembly language essentially provides a straight mapping of symbols into machine code, but does not provide advanced constructs for doing routine tasks that require many machine instructions. For example, adding two numbers might require a dozen or so machine instructions, implementing a loop over the digits. To program this in assembly, you need to specify all of the dozen instructions in the loop, just using more human-readable codes.
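The loop over the digits can be pictured with a short sketch. This is Python, not assembly, and storing each number as a list of decimal digits is an assumption made purely for illustration. The point is only to show the carry-the-one bookkeeping that the individual machine instructions would have to spell out.

```python
def add_digit_lists(a: list[int], b: list[int]) -> list[int]:
    """Add two numbers stored as lists of decimal digits, least significant first."""
    result = []
    carry = 0
    for i in range(max(len(a), len(b))):
        # Treat a missing digit in the shorter number as zero.
        digit_a = a[i] if i < len(a) else 0
        digit_b = b[i] if i < len(b) else 0
        total = digit_a + digit_b + carry
        result.append(total % 10)  # the digit to keep in this position
        carry = total // 10        # the amount carried to the next position
    if carry:
        result.append(carry)
    return result
```

For example, 47 is stored as `[7, 4]` and 85 as `[5, 8]`, and adding them gives `[2, 3, 1]`, which is 132 read from the last digit back to the first.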

This is where FORTRAN and BASIC enter the stage. These are so-called high-level languages, which allow you to simply specify a single operation to add two numbers together. This accords much better with human thinking, but there is a problem giving such an instruction to a computer. To achieve this addition, the processor still needs to execute a loop of a dozen or so instructions. To bridge this gap, the high-level addition code first has to be compiled or interpreted into machine code. The thing that does this job is another piece of computer code, known as either a compiler or an interpreter.

A compiler processes the high-level computer code written by a human, and writes out a new file composed of machine language instructions that the computer processor can understand directly. An interpreter does essentially the same job, but the difference is that an interpreter translates the code into machine language while the program is running and feeds it directly to the processor, whereas a compiler does it beforehand and writes the machine code to a file for later use.
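A toy sketch may help show the difference. The "language" here only understands sums such as `2 + 3 + 4`, and the `LOAD` and `ADD` instructions belong to a pretend machine invented for this example, not to any real processor. The function names are likewise hypothetical.

```python
def interpret(source: str) -> int:
    """Interpreter: read a sum like "2 + 3 + 4" and carry it out as it is read."""
    total = 0
    for token in source.split("+"):
        total += int(token)
    return total


def compile_sum(source: str) -> list[tuple[str, int]]:
    """Compiler: translate the same sum into instructions for a pretend machine."""
    instructions = [("LOAD", 0)]
    for token in source.split("+"):
        instructions.append(("ADD", int(token)))
    return instructions


def run(instructions: list[tuple[str, int]]) -> int:
    """The pretend processor: execute instructions that were compiled earlier."""
    accumulator = 0
    for opcode, operand in instructions:
        if opcode == "LOAD":
            accumulator = operand
        elif opcode == "ADD":
            accumulator += operand
        else:
            raise ValueError(f"unknown instruction: {opcode}")
    return accumulator
```

Calling `interpret("2 + 3 + 4")` does the work immediately, whereas `compile_sum("2 + 3 + 4")` produces a list of instructions that could be saved and handed to `run` any number of times later. Both routes arrive at the same answer.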

High-level languages have different features which make them more or less useful for specific computing tasks. They also have various quirks which make them easier or harder to learn to use efficiently. Over the years, many languages have been designed for different jobs, and many have fallen by the wayside when they turned out to be too difficult or limited to be of much use. Some languages turn out to be generally useful and become popular, and then there is a range of more specialised and niche languages. (And then there are esoteric programming languages, which are deliberately designed to be obscure, difficult, artistic, or otherwise bizarre. I've designed some of these myself.)

This is kind of the point where I bailed out of learning about programming. I briefly had a job building commercial web sites, and started messing with automatic programming, also known as code generation, which is a way to write your high-level code faster. In many cases, high-level computer code contains a bunch of constructs that are standardised or repetitive. Getting a human to actually type these out can be a waste of time, especially for big coding tasks. With code generation, you use a templating language to specify what the bits of code you want to write need to do, and then you run a code generator over your template, and it spits out the computer code for you.
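As a very small sketch of the idea, the following uses Python's standard `string.Template` as the templating language. The template, the field names, and the `generate_getters` function are hypothetical, made up for illustration. Real code generators are considerably more elaborate.

```python
from string import Template

# Hypothetical template for a repetitive "getter" function.
GETTER_TEMPLATE = Template(
    "def get_${field}(record):\n"
    "    return record['${field}']\n"
)


def generate_getters(fields: list[str]) -> str:
    """Fill in the template once per field and join the results into one source text."""
    return "\n".join(GETTER_TEMPLATE.substitute(field=name) for name in fields)


generated_source = generate_getters(["title", "number"])

# Running the generated text defines the functions, as if a person had typed them.
namespace: dict = {}
exec(generated_source, namespace)
```

After this runs, `namespace["get_title"]` and `namespace["get_number"]` are ordinary functions that nobody typed out by hand. The saving is trivial for two fields, but it adds up when there are hundreds.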

If you set this up right, it can save considerable time and effort, but the level of abstraction is now one higher than a high-level programming language. And as I discovered when I tried to get to grips with it, it's one level too high for me to deal with comfortably. You're writing code that another piece of code will translate into another code, that another piece of code will then translate into yet another code that the machine actually understands, to - hopefully - do the job you wanted. Some people thrive on this sort of stuff, but I have to say I'm not one of them.

And there's my potted, personal history of computer programming, from my point of view. Nowadays I program as little as I can get away with. I know a lot of people enjoy programming just for the sheer fun of constructing efficient or tricky code and getting it to work, giving them a great sense of achievement. This is fortunate for the rest of us, since there's computer code behind almost everything we do today!

LEGO® is a registered trademark of the LEGO Group of companies, which does not sponsor, authorise, or endorse this site. This material is presented in accordance with the LEGO® Fair Play Guidelines.